Every specialist now has a second job. It is not in the contract. It is not in the title. It is not in the rate. But it is already part of the work.

The first job stays the same. Defining a system, designing a feature, writing code, planning a campaign, drafting a regulation, structuring requirements, leading a team. The discipline is unchanged, the standards are unchanged, the responsibility is unchanged.

The second job appeared quietly. Operating AI inside the discipline. Choosing what to delegate. Preparing context. Carrying information across tools. Validating output that looks correct but is not. Recovering when one part of the chain breaks. This work is not formally described anywhere. People already do it. They have not named it yet.

This is not a temporary adjustment. It is the new condition of professional work.

The Infrastructure Is Fragmented

AI is everywhere. Yet it remains an incomplete working environment.

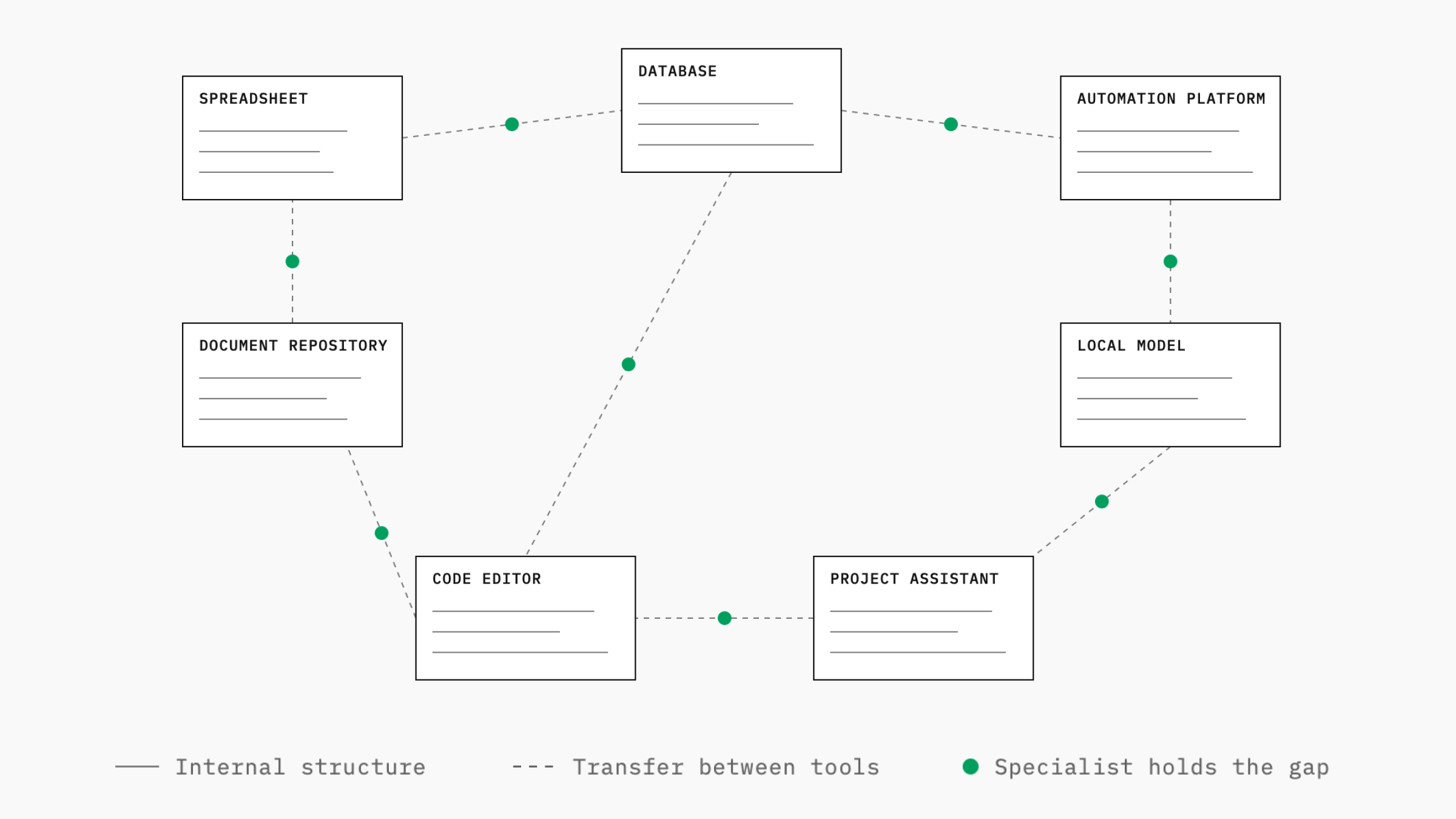

The models are powerful. The tools around them are fragmented. Each tool solves a slice of AI-assisted work and stops at its own boundary. There is no universal layer that makes context portable across professional work.

Information is selected, reformatted, translated, validated, and carried from one stage to another by hand. This work happens between tools, not inside them. It becomes visible when something breaks, and by then it is already part of the routine.

This is the practical environment. Not the marketed version with seamless workflows. The actual version, where every boundary is a point of loss.

The fragmentation may be temporary. The layer of work above the specialist will remain. Better tools reduce friction inside each component. They do not remove the responsibility for what moves between components. That responsibility belongs to the specialist by structural necessity, not because current technology is limited.

AI work lacks a unified context layer. Specialists hold the fragments together by hand.

The Specialist Becomes the Integration Layer

A specialist working with AI does not just use a tool. They become the part of the system the tools cannot replace.

They define what context the task needs before they begin. They decide which information matters and which information would create noise. They choose where AI helps and where domain judgment must stay in control. They notice when output is plausible but wrong. They catch a silently broken workflow before its result arrives.

This is infrastructure thinking. Not as a narrow technical specialization. As a working method that every specialist now develops inside their own discipline.

A product manager applies it to research, prioritization, stakeholder alignment, and product decisions. A designer applies it to concept exploration, UX logic, design systems, and asset generation. A developer applies it to code, review, debugging, and architecture. A marketer, analyst, researcher, lawyer, doctor, teacher, or architect carries the same problem inside a different professional context.

The specialization stays different. The layer above it converges.

What This Means in Practice

Working at this level changes the structure of daily work.

Each task starts with a context decision before any prompt is written. Naming what the model needs to know. Naming what it must not see. Locating where the information lives. Marking what is confirmed, what is outdated, and what is only an assumption. Defining how the result returns into the system where the work belongs.
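
One way to make this context decision concrete is to write it down as data before any prompt exists. The following is a minimal sketch in Python, not a prescribed format: the names `Status`, `ContextItem`, and `ContextManifest`, and the example entries, are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    CONFIRMED = "confirmed"
    OUTDATED = "outdated"
    ASSUMPTION = "assumption"


@dataclass
class ContextItem:
    name: str            # what the information is
    source: str          # where the information lives
    status: Status       # confirmed, outdated, or only an assumption
    share: bool = True   # False means the model must not see it


@dataclass
class ContextManifest:
    task: str
    return_to: str       # where the result goes back into the real system
    items: list[ContextItem] = field(default_factory=list)

    def for_model(self) -> list[ContextItem]:
        """Items allowed into the prompt: shareable and not outdated."""
        return [
            i for i in self.items
            if i.share and i.status is not Status.OUTDATED
        ]

    def caveats(self) -> list[str]:
        """Names of shared items that are assumptions and should be flagged."""
        return [i.name for i in self.for_model() if i.status is Status.ASSUMPTION]


# Hypothetical example entries, invented for illustration only.
manifest = ContextManifest(
    task="Draft architecture options for the new feature",
    return_to="architecture decision record",
    items=[
        ContextItem("stakeholder comments", "meeting notes", Status.CONFIRMED),
        ContextItem("old API constraints", "legacy wiki", Status.OUTDATED),
        ContextItem("expected load", "team estimate", Status.ASSUMPTION),
        ContextItem("customer contract terms", "legal folder",
                    Status.CONFIRMED, share=False),
    ],
)

print([i.name for i in manifest.for_model()])
print(manifest.caveats())
```

The point of the sketch is the order of operations: the manifest exists before the prompt does, and the prompt is built only from what `for_model()` returns.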

Each tool is evaluated by its boundary behavior, not by its core capability alone. A tool that performs well in isolation but traps context inside itself creates friction at every handoff. A tool that performs adequately and keeps context usable across handoffs becomes more valuable for real work.

Each output is treated as part of a chain, not as a final result. Validation happens at the layer where the specialist has responsibility, because no other layer does it for them. AI generates, compares, summarizes, and challenges. It does not know whether the result fits the full professional situation.
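
Validation at the specialist's layer can be as simple as a named list of checks that an output must pass before it moves to the next step. The sketch below is an assumption about how that might look in Python; `failed_checks`, `CHECKS`, and the two example checks are hypothetical, and real checks would encode domain judgment that no string test can fully capture.

```python
from typing import Callable

# A check takes the AI output and returns True if the output passes.
Check = Callable[[str], bool]


def failed_checks(output: str, checks: dict[str, Check]) -> list[str]:
    """Return the names of the checks the output fails; empty means it may move on."""
    return [name for name, check in checks.items() if not check(output)]


# Hypothetical checks a specialist might own for a requirements draft.
CHECKS: dict[str, Check] = {
    "names the data region": lambda text: "region" in text.lower(),
    "no unresolved TODOs": lambda text: "todo" not in text.lower(),
}

draft = "Data is stored in the EU region. TODO: confirm retention period."
problems = failed_checks(draft, CHECKS)
print(problems)
```

The checks do not decide whether the draft is right. They only make explicit what the specialist refuses to pass along unexamined.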

Each broken integration becomes a known failure mode, not a surprise. The chain is mapped. The weak points are named. The recovery path is explicit.
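
Mapping the chain can also be written down directly. This is a small sketch under stated assumptions: the `Handoff` record, the example chain, and the helper names are hypothetical, and the failure modes listed are illustrations, not observations from any particular toolset.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Handoff:
    source: str
    target: str
    failure_mode: str   # what tends to go wrong at this boundary
    recovery: str       # explicit recovery path; empty if none is defined yet


# Hypothetical chain, invented for illustration only.
CHAIN = [
    Handoff("meeting notes", "AI summary",
            "decisions dropped from summary", "re-check summary against notes"),
    Handoff("AI summary", "requirements doc",
            "assumptions pasted as facts", "mark status before pasting"),
    Handoff("requirements doc", "ticket tracker",
            "stale version copied", ""),
]


def missing_recovery(chain: list[Handoff]) -> list[str]:
    """List the handoffs that have a named failure mode but no recovery path."""
    return [f"{h.source} -> {h.target}" for h in chain if not h.recovery.strip()]


def recovery_for(chain: list[Handoff], source: str, target: str) -> Optional[str]:
    """Look up the recovery path for one handoff, or None if it is not mapped."""
    for h in chain:
        if h.source == source and h.target == target:
            return h.recovery or None
    return None


print(missing_recovery(CHAIN))
```

A handoff that shows up in `missing_recovery` is a weak point that has been named but not yet covered, which is still better than one that has never been written down.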

This is not extra work added to the discipline. It is the discipline operating honestly in the current environment.

Where This Became Clear to Me

The shift was visible to me in one specific situation. The work required turning fragmented stakeholder input into a coherent architecture for new functionality. There was no clean source of truth for the system. There were comments, assumptions, partial decisions, unclear constraints, changing signals, and dependencies that had to be connected before any product decision could be made.

AI was useful in that work. It helped compare fragments, surface contradictions, structure possible decisions, and locate missing links. It was useful not because it had the answer. It was useful because it operated across several layers of the problem at once.

The value depended on context.

When the context was clear, AI accelerated thinking. When the context was incomplete, the output became shallow, generic, or subtly wrong. The work was not about asking better questions. It was about carrying the right context into each step so that AI could operate meaningfully.

That was the practical point where the layer became visible. AI did not reduce the work to prompting. It added a layer above the specialist: context preparation, validation, routing, and recovery. The specialist who handles that layer well moves through complex work faster and with sharper judgment than the same specialist using the same tools without it.

This also connects to what I am building in my own work. I build around the abilities I use: structuring ambiguity, connecting fragments, defining systems, and turning scattered information into something that can support decisions. I do not treat this layer as a fixed answer. I treat it as a working method that has to be tested, adjusted, and improved through use. The tools change. The learning does not stop. For me, openness to new tools is not a slogan. It is how the layer stays operational.

Why This Is a Skill Worth Learning

This layer is easy to misread as overhead. A nuisance until the tools mature.

The tools will mature. The layer will stay.

Defining context, mapping dependencies, designing handoffs, maintaining state across systems, and validating transitions are not temporary skills tied to the current generation of AI. They are the operational form of systems thinking. AI made this layer visible because AI cannot work reliably without it. Strong professional work always required it. It stayed hidden inside experience, judgment, and informal process.

Now it is explicit.

The professionals who build this skill operate inside the new structure of work. They treat AI as a layer placed inside a working system, not as a generator of outputs.

This is one of the few moments where a structural skill, previously invisible inside the work, becomes visible as the work itself.

The Principle

AI did not remove the specialist. It added a new layer above the specialist.

- The model is not the work.

- The prompt is not the system.

- The tool is not the infrastructure.

The work is knowing what context matters, how it moves, where it breaks, what must be validated, and how the result returns into the real professional environment.

Every specialist operates this layer in some form. The ones who treat it as a craft move faster, think more clearly, and produce work the previous generation of tools could not support. The ones who treat it as a temporary inconvenience keep waiting for the tools to solve a problem that belongs to the structure of work itself.

The tools are not the answer. The specialist with infrastructure thinking is.