A reflection on building EngMe from zero, and what the same work looks like now, with AI as a tool inside the process.

Starting Without a System

When I joined EngMe, there was no product. There was an idea to help people learn English through video, and nothing else. No users, no data, no validated assumptions, no clear sense of what had to be built.

This is the point most teams underestimate. The problem at zero is not a missing feature set or a missing design. The problem is the absence of a system. There is nothing to operate within, nothing to validate against, and no stable model to rely on.

In such conditions, the work does not begin with solutions. It begins with defining the environment in which a solution can exist. Today, AI can quickly generate hypotheses about users, markets, and behaviors. It can cluster signals and suggest directions within minutes. But these outputs are only useful if there is already a structure to evaluate them against. Without that structure, they remain noise. The first step has not changed. Someone still has to define the boundaries of the system and decide what is worth exploring at all.

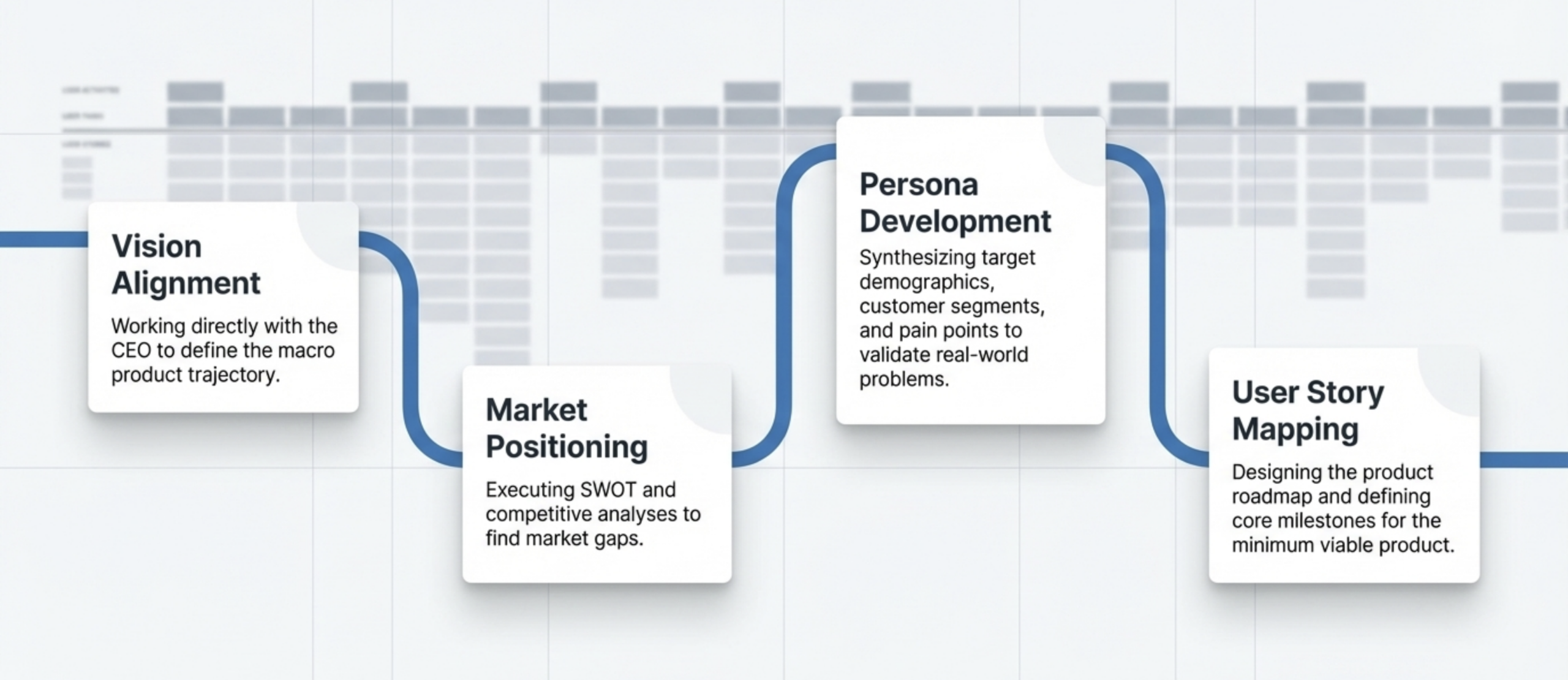

Building a Model Instead of Searching for Answers

What looks like standard product work (research, personas, business modeling) was not really about validation in this case. It was about building a coordinate system. I needed to define who the user is in this specific context, what learning actually means for them, where existing solutions fail, and which behavior the product is trying to change. This was not discovery in the classical sense. It was system construction.

AI accelerates this phase significantly. It can summarize competitors, cluster patterns, and surface common approaches across a market in a fraction of the time it used to take. It lowers the cost of gathering and structuring information. What it does not do is decide what matters. It does not resolve contradictions between business intent and user behavior. The model still has to be constructed deliberately. The goal is not to be right. The goal is to build a model that can survive contact with reality.

MVP as a System Probe

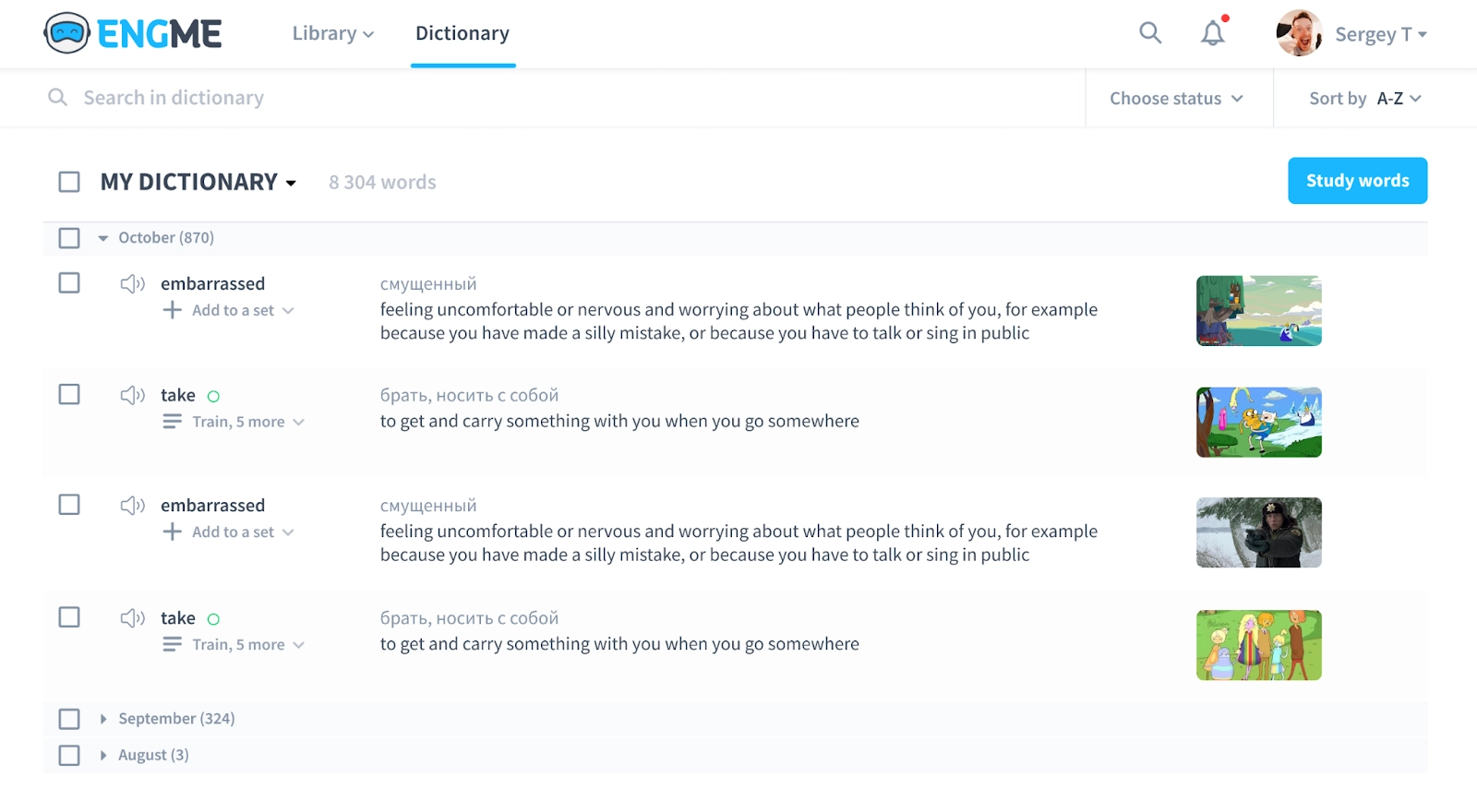

The first version of the product included a dictionary, a video player, curated content, and basic user actions. It may look like a minimal feature set, but that framing is misleading. This was not a product. It was an instrument designed to test whether the model holds.

We were not measuring success metrics yet. We were observing system behavior: where users stop, where they repeat actions, where the flow breaks, and where the logic does not match expectations.

AI today can help simulate flows, generate edge cases, and predict possible failure points before the MVP is even in users’ hands. It accelerates iteration cycles and shortens the distance between an assumption and its first stress test. But simulation does not replace real interaction. The MVP still exists to confront assumptions with actual behavior, not with predicted behavior.

Reframing the Problem

At first, the problem seemed obvious: users need to learn words. But observation quickly showed that access to words was not the issue. Retention was.

This shift moves the problem out of interface and content and into time and memory. The product is no longer about displaying information. It becomes about managing how knowledge evolves over time inside the user.

AI can surface similar insights by analyzing large datasets and research. It can suggest that retention is a critical factor in any learning system. What it does not do is decide that this specific insight applies to this specific product, and then rebuild the system around it. That translation still belongs to the person holding the model.

Designing the Learning Logic

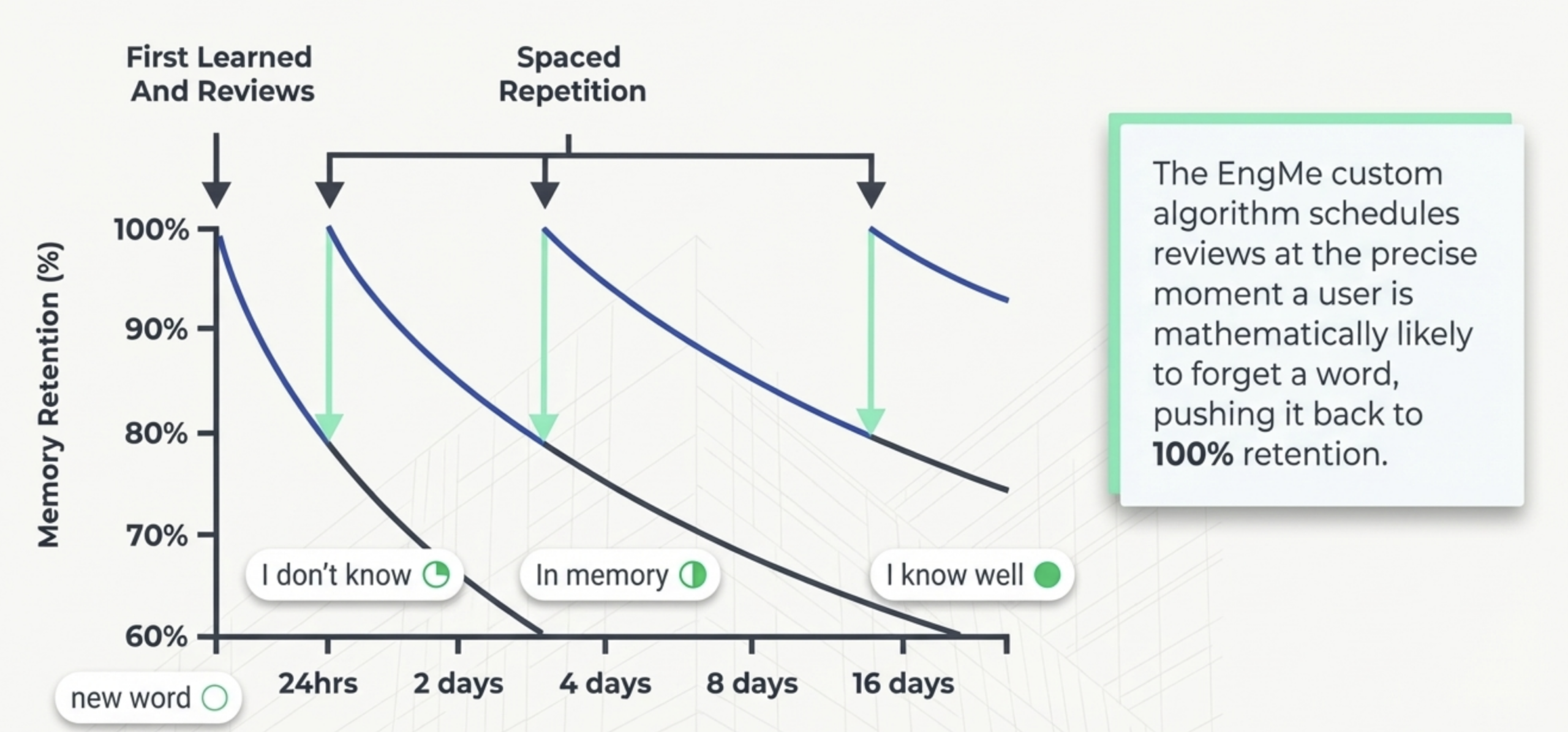

I used spaced repetition as the conceptual foundation. Selecting the method was the easy part. The real work was formalizing it into a system the product could actually run.

I treated each word as an entity with a defined state:

- new: the word has just entered the user’s vocabulary;

- don’t know: the user has seen the word but cannot recall its meaning reliably;

- in memory: the user recognizes the word under review conditions;

- know well: the word has been retained across multiple intervals.

Each state carried a time interval, a retention expectation, and a review rule, grounded in the forgetting curve. A word does not stay in a state. It moves between states based on user interaction, elapsed time, and the outcome of each review cycle. The system recalculates what should happen to every word after every touch.
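As an illustration, a per-state rule table of this kind might be sketched as follows. This is a minimal sketch, not the shipped EngMe implementation: the names `WordState`, `StateRule`, `RULES`, `estimated_retention`, and `is_due` are hypothetical, the interval and retention numbers are placeholders, the review rule is reduced to a simple due check, and the exponential form of the forgetting curve is an assumed simplification.

```python
import math
from dataclasses import dataclass
from enum import Enum


class WordState(Enum):
    NEW = "new"
    DONT_KNOW = "don't know"
    IN_MEMORY = "in memory"
    KNOW_WELL = "know well"


@dataclass(frozen=True)
class StateRule:
    interval_days: float       # time until the next review is due
    expected_retention: float  # recall probability the state is meant to represent


# Placeholder values for illustration only (assumptions, not the real intervals).
RULES = {
    WordState.NEW: StateRule(interval_days=1, expected_retention=0.5),
    WordState.DONT_KNOW: StateRule(interval_days=1, expected_retention=0.6),
    WordState.IN_MEMORY: StateRule(interval_days=7, expected_retention=0.8),
    WordState.KNOW_WELL: StateRule(interval_days=30, expected_retention=0.9),
}


def estimated_retention(elapsed_days: float, stability_days: float) -> float:
    """Assumed exponential forgetting curve: R = exp(-t / S)."""
    return math.exp(-elapsed_days / stability_days)


def is_due(state: WordState, elapsed_days: float) -> bool:
    """Simplified review rule: a word is due once its state's interval has elapsed."""
    return elapsed_days >= RULES[state].interval_days
```

Under these assumptions, `is_due(WordState.IN_MEMORY, 7)` is true, while a "know well" word reviewed ten days ago is not yet due.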

This is where the work becomes structural rather than conceptual. Defining the states is not enough. The harder part is defining the transitions: what moves a word forward, what sends it back, what happens when the user is inactive for longer than the interval allows, what happens when a review is inconclusive. Each rule has to be consistent with every other rule. Otherwise, the system produces contradictions that the user experiences as “this app is wrong about me.”
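To make the four transition questions concrete, here is one possible way to write them down as a single function. It is a sketch under stated assumptions: the specific rules (promote one step on recall, fall back to "don't know" on failure, hold on an inconclusive review, demote one step after inactivity beyond twice the interval) are hypothetical choices for illustration, as are the names `Outcome`, `INTERVAL_DAYS`, `OVERDUE_FACTOR`, and `next_state`.

```python
from enum import Enum


class WordState(Enum):
    NEW = "new"
    DONT_KNOW = "don't know"
    IN_MEMORY = "in memory"
    KNOW_WELL = "know well"


class Outcome(Enum):
    RECALLED = "recalled"
    FAILED = "failed"
    INCONCLUSIVE = "inconclusive"


# Hypothetical review intervals in days (placeholders, not real product values).
INTERVAL_DAYS = {
    WordState.NEW: 1,
    WordState.DONT_KNOW: 1,
    WordState.IN_MEMORY: 7,
    WordState.KNOW_WELL: 30,
}
OVERDUE_FACTOR = 2.0  # assumption: inactivity beyond 2x the interval counts as a lapse

PROMOTE = {
    WordState.NEW: WordState.IN_MEMORY,
    WordState.DONT_KNOW: WordState.IN_MEMORY,
    WordState.IN_MEMORY: WordState.KNOW_WELL,
    WordState.KNOW_WELL: WordState.KNOW_WELL,
}
DEMOTE = {
    WordState.NEW: WordState.NEW,
    WordState.DONT_KNOW: WordState.DONT_KNOW,
    WordState.IN_MEMORY: WordState.DONT_KNOW,
    WordState.KNOW_WELL: WordState.IN_MEMORY,
}


def next_state(state: WordState, outcome: Outcome, elapsed_days: float) -> WordState:
    """Apply the inactivity rule first, then the review outcome."""
    if elapsed_days > INTERVAL_DAYS[state] * OVERDUE_FACTOR:
        state = DEMOTE[state]  # long inactivity weakens confidence by one step
    if outcome is Outcome.FAILED:
        return WordState.DONT_KNOW  # what sends a word back
    if outcome is Outcome.INCONCLUSIVE:
        return state  # no evidence either way: hold the (possibly demoted) state
    return PROMOTE[state]  # what moves a word forward
```

Writing every rule into one function makes contradictions easier to spot. For example, in this sketch a "know well" word recalled after 90 days of inactivity is demoted and then promoted, so it ends up where it started instead of being both rewarded and penalized in ways the user cannot follow.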

AI today can assist in modeling systems like this. It can simulate transitions, suggest interval tuning, and explore variations of the algorithm across thousands of synthetic users. What it still does not do is define the structure itself. It operates inside the rules, not above them. The definition of states, transitions, and constraints is what makes the system coherent in the first place, and that definition belongs to the product architect.

Where the System Breaks

The complexity did not come from the idea. It came from making the system executable. The main challenge was determining the actual state of a word. A state cannot be observed directly. It has to be inferred from behavior: from timing, from past interactions, from how the user responds during review.
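A rough sketch of what inferring a state from behavior can look like is shown below. Everything in it is an assumption made for illustration: the `Review` record, the thresholds `MIN_GAP_DAYS`, `SLOW_RESPONSE_SECONDS`, and `STREAK_FOR_KNOW_WELL`, and the heuristic itself. It is not the logic EngMe used, only one way to combine timing, past interactions, and response behavior into a single guess.

```python
from dataclasses import dataclass


@dataclass
class Review:
    days_since_previous: float  # timing: gap since the previous touch
    recalled: bool              # outcome of this review
    response_seconds: float     # how quickly the user answered


# Hypothetical thresholds, chosen only to make the example concrete.
MIN_GAP_DAYS = 1.0           # a recall minutes after the last review says little
SLOW_RESPONSE_SECONDS = 8.0  # a slow answer is treated as weak recall
STREAK_FOR_KNOW_WELL = 3     # "retained across multiple intervals"


def infer_state(history: list[Review]) -> str:
    """Guess a word's state from its review history (oldest first)."""
    if not history:
        return "new"
    last = history[-1]
    if not last.recalled:
        return "don't know"

    # Count trailing successful recalls that were genuinely spaced out.
    spaced_streak = 0
    for review in reversed(history):
        if not review.recalled:
            break
        if review.days_since_previous >= MIN_GAP_DAYS:
            spaced_streak += 1

    confident = last.response_seconds <= SLOW_RESPONSE_SECONDS
    if spaced_streak >= STREAK_FOR_KNOW_WELL and confident:
        return "know well"
    return "in memory"
```

The result is still a guess. Two users with identical histories may hold the word very differently, which is why the surrounding rules have to tolerate a wrong inference rather than assume a correct one.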

AI can help analyze patterns and estimate the probability of a given state. It can find inconsistencies and gaps in the logic faster than a manual review. What it does not do is remove the uncertainty. The system still has to operate under incomplete information. The task is not to eliminate uncertainty but to define rules that function inside it without collapsing.

Constraints as a Design Tool

Technical limitations made a fully adaptive system impossible. The model had to be simplified without breaking its logic. That required deciding what stays fixed, what can vary, and how much complexity the system can actually sustain in production.

AI can propose more sophisticated and adaptive models on demand. It can suggest additional personalization layers without much effort. But every additional layer introduces cost, instability, and risk. A significant part of product definition is deciding what should not be implemented. The final system was not the most advanced version of the idea. It was the most stable version the constraints allowed, and stability was the feature.

What This Work Actually Is

From the outside, the process can look like a familiar sequence: research, MVP, iteration, feature development. In reality, none of these labels describes the core work. What was happening was the gradual removal of ambiguity and the transformation of an abstract idea into a structured system with defined behavior.

AI supports this process by accelerating documentation, structuring requirements, and aligning information across stakeholders. It improves speed and clarity at every step. What it does not replace is the need to define the system itself. That is the layer where business intent, user needs, technical constraints, and implementation logic have to converge into a single coherent model.

After launch, users stayed on the platform longer and engaged with content more consistently, not because of a single feature, but because the system behind those features was internally consistent.

The Core Skill

Working from zero is not about handling missing information. It is about the ability to create a coherent system before that information exists: to define structure, introduce constraints, and establish the rules that make the system operable.

AI makes this process faster. It reduces friction in analysis, synthesis, and validation. It does not change the nature of the work. The system still has to be defined by someone who understands how its parts interact and where its boundaries lie.

Everything else comes after.